Over four weeks of QUT Motorsport Design Camp during the uni holidays, our team worked on redesigning the steering actuator system for the QEV-5 autonomous Formula SAE vehicle.

The changes we developed during Design Camp 2026 will feed directly into this year’s vehicle, QEV-6, which will compete at the end of the year.

To be honest, I hadn’t originally planned on joining Motorsport. I assumed it was mostly mechanical — chassis, suspension, brakes — and as an electrical student I wasn’t sure where I’d fit. But once I realised there was serious electrical and autonomous work happening, I thought it would be a good opportunity to deepen my knowledge and get involved in something hands-on over the holidays.

I was brought in to help work on designing a new steering actuator.

At the time, I didn’t even fully understand what that meant.

On paper, the task sounded straightforward:

Select a motor that meets the torque and speed requirements.

But as I started getting my head around the problem — and as we worked through it as a team — we realised something important:

We didn’t really know enough about the problem.

What initially looked like a component selection exercise quickly became a broader systems engineering problem involving modelling, physical validation, FEA, track testing, and cross-team trade-offs.

The deeper we went, the clearer it became that this wasn’t about “picking a motor.” It was about understanding the system well enough to justify whatever we chose.

Realising We Didn’t Really Know the Requirements

QUT Motorsport has a lot of history behind it.

There are years’ worth of previous Design Camps and thesis projects by students who went far deeper into specific subsystems than we were at this stage. We spent time digging through that work and trying to extract as much value as we could, drawing on both our own archives and other Formula SAE teams locally and internationally.

We found useful ideas.

We got a sense of positioning strategies.

We saw different actuator configurations.

We understood how others had approached packaging.

But what we didn’t get was something we could confidently validate against our own vehicle.

The torque data we had was based on static testing. We could get a “vibe” for what worked — but not hard numbers we could rely on.

Once we started properly thinking through the actuator selection, we realised that without reliable steering load data, any motor choice would be built on shaky foundations.

So as a team, we made the call to slow down.

Instead of designing around assumptions, we committed to measuring the problem properly.

That decision — to design our own torque testing approach — shaped everything that followed.

Designing a Torque Testing Rig for Autonomous Steering

This was honestly one of the most enjoyable parts of QUT Motorsport Design Camp for me.

It felt like going back to basics — like first-year experimentation — except this time the result was going to influence a real design decision on a real car.

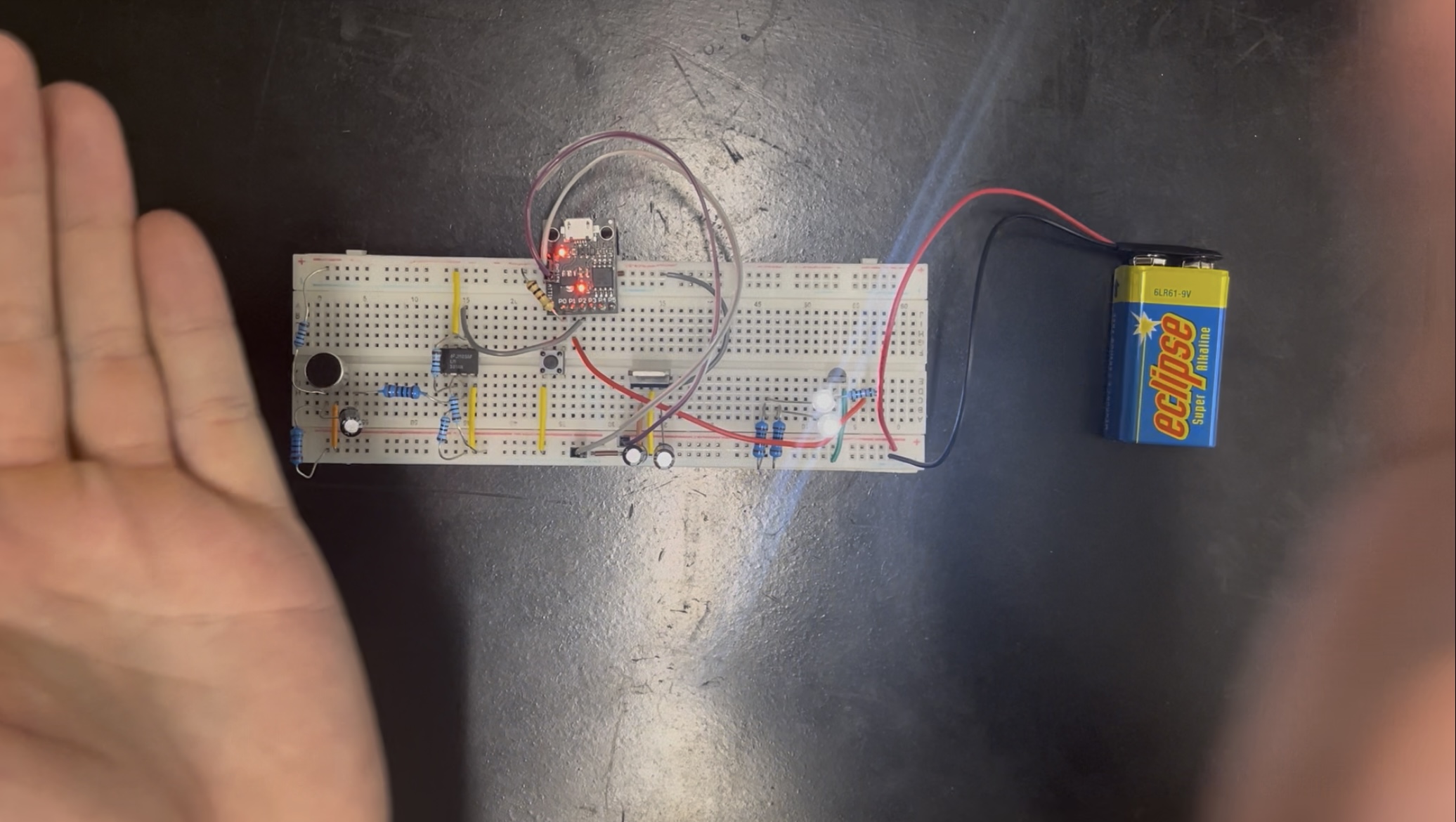

We needed a specific kind of tool to measure torque at the steering wheel, which we didn’t have.

So we built one.

It wasn’t a polished laboratory instrument. It wasn’t going to win any awards for precision. But it was built to answer a specific question:

How much torque is actually required at the steering wheel on track?

As a team, we moved quickly. We sketched, modelled, printed, assembled — and within a short time had a working prototype. Then we found flaws. So we redesigned it. Then refined it again.

That iteration cycle — prototype, test, adjust, repeat — was probably one of the reasons we were able to make meaningful progress in a short window.

It still wasn’t perfect.

But it was ours.

Because we designed it ourselves, we understood its limitations. We measured the spring constants. We refined geometry. We thought carefully about where error could creep in and what margin we needed to apply.

And most importantly, it gave us validated data from our own vehicle, under our own conditions.

That data — even with margins of error — was far more useful than relying on assumptions or loosely comparable numbers from elsewhere.
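As a rough illustration of how measured spring constants and error margins can turn into a torque estimate, here is a minimal Python sketch. It assumes a rig where a linear spring acts at a known lever arm from the steering axis, which may not match our actual geometry. The function names and every number are placeholders, not our measurements.

```python
def spring_torque(k_n_per_m, deflection_m, lever_arm_m):
    """Torque in N·m from a linear spring (Hooke's law) acting at a lever arm."""
    force_n = k_n_per_m * deflection_m  # F = k * x
    return force_n * lever_arm_m        # T = F * r


def torque_bounds(k_n_per_m, deflection_m, lever_arm_m, rel_errors):
    """Return (low, nominal, high) torque using a first-order worst-case bound.

    For a product of measured quantities, the worst-case relative error is
    approximately the sum of the individual relative errors.
    """
    nominal = spring_torque(k_n_per_m, deflection_m, lever_arm_m)
    total_rel_error = sum(rel_errors)
    return nominal * (1 - total_rel_error), nominal, nominal * (1 + total_rel_error)


if __name__ == "__main__":
    # Hypothetical values: 2000 N/m spring, 10 mm deflection, 100 mm lever arm,
    # with assumed 5 %, 2 % and 1 % relative errors on the three measurements.
    low, nominal, high = torque_bounds(2000.0, 0.010, 0.100, [0.05, 0.02, 0.01])
    print(f"torque: {nominal:.2f} N·m (range {low:.2f} to {high:.2f} N·m)")
```

Carrying the low and high bounds forward, rather than a single number, is one way to keep the rig's known limitations visible in later decisions.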

This stage reinforced something simple but important:

Useful data, even if imperfect, beats clean-looking assumptions every time.

Modelling Meets Reality

We began with analytical modelling to estimate torque requirements based on steering angle and effective loads.

On paper, it looked great.

We had a clean graph. A nice relationship between steering angle and required torque.

But it was still just that — a model.

So we decided to bench test it properly.

We set the steering wheel up in the lab, added weights, and recorded the angle response: a controlled experiment on the wheel itself. It felt a bit like going back to first-year labs again.

That’s when we saw the discrepancies.

The relationship was still there. The tool worked. The trend just didn’t match our expectations.

It turned out that effects the model had left out, friction in particular, were throwing it off.

But now we could quantify it and account for it properly. That gave us a much more grounded understanding of the actual torque requirements.
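One way to quantify that kind of offset is to fit a straight line to the bench data and read the intercept as a friction term. The sketch below is an illustration only: it assumes friction behaves like a constant torque offset, and the readings and lever arm are invented to show the method, not taken from our tests.

```python
G = 9.81  # gravitational acceleration, m/s^2


def applied_torque(mass_kg, lever_arm_m):
    """Torque in N·m produced by a hanging mass at a horizontal lever arm."""
    return mass_kg * G * lever_arm_m


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


if __name__ == "__main__":
    # Hypothetical bench data: steering angle reached (deg) for each hung mass (kg)
    # on an assumed 0.15 m lever arm.
    angles_deg = [5.0, 10.0, 15.0, 20.0]
    masses_kg = [0.4, 0.6, 0.8, 1.0]
    torques_nm = [applied_torque(m, 0.15) for m in masses_kg]

    stiffness, friction = fit_line(angles_deg, torques_nm)
    print(f"stiffness ~ {stiffness:.4f} N·m/deg, friction offset ~ {friction:.3f} N·m")
```

A non-zero intercept is the part a frictionless model would miss: torque that has to be supplied before the wheel moves at all.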

Next, safety.

Before taking the rig to the track, we needed to be confident it wouldn’t fail under load. So we ran FEA on the steering wheel and test setup.

Ansys was new territory for me. There was a bit of learning on the fly, and some guidance from the mechanical team along the way. It was a good example of how multidisciplinary teams actually function — leaning on each other’s strengths rather than pretending you know everything.

The results were solid. Structurally, we were in the clear.

At that point, we had something we didn’t have before:

- A validated torque estimate grounded in physical testing

- An understanding of the model’s limitations

- Structural confidence to move into track testing

And that combination made the next stage feel deliberate rather than risky.

Track Testing in Formula SAE Autonomous Systems

Track testing at Lakeside Park added another layer of realism.

There were some kinks getting the car up and running on the day. But for our purposes, we didn’t need a full autonomous run. We just needed a controlled push test to observe steering behaviour under realistic conditions.

We captured the steering movement using a GoPro and later analysed the footage in ImageJ to estimate the torque required from initial movement through to hard lock.

That process gave us something we hadn’t had before — a grounded estimate of real-world steering effort.

From there, we applied a conservative factor of safety based on what we observed on the day. Rather than designing to the exact measured value, we designed above it, accounting for variability in friction, dynamic behaviour, and conditions we couldn’t perfectly replicate in a single test session.
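The margin step is simple arithmetic, sketched below in Python. The factor of safety, the measured peak, and the candidate rating are all made-up placeholders; the point is only the shape of the check.

```python
def design_torque(measured_peak_nm, factor_of_safety):
    """Torque the actuator must deliver after applying a factor of safety."""
    if factor_of_safety < 1.0:
        raise ValueError("factor of safety should be at least 1.0")
    return measured_peak_nm * factor_of_safety


def torque_margin(available_nm, required_nm):
    """Fractional headroom: 0.25 means 25 % more torque than required."""
    return (available_nm - required_nm) / required_nm


if __name__ == "__main__":
    # Hypothetical numbers, not our measured values or any real motor rating.
    required = design_torque(measured_peak_nm=8.0, factor_of_safety=1.5)
    margin = torque_margin(available_nm=15.0, required_nm=required)
    print(f"required {required:.1f} N·m, margin {margin:.0%}")
```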

At that point, actuator selection shifted from comparing datasheets to making an evidence-based decision grounded in our own validated data.

Packaging Constraints in Formula SAE Design

When it came time to select the actuator, performance alone wasn’t the deciding factor.

Based on our validated torque data, one motor stood out. It comfortably met the requirements with solid headroom. On paper, it was the obvious choice.

But it was a packaging nightmare.

In Formula SAE design, a few millimetres in diameter can cascade into:

- Structural redesign

- Changes to mounting geometry

- Impacts on steering linkage alignment

- Electrical routing implications

- CAN integration constraints

- Increased manufacturing effort and timeline risk

It wasn’t that the mechanical team “wouldn’t like it.” It was that the system as a whole would face the consequences.

That’s when the decision stopped being purely electrical.

As a team, we built a structured decision matrix across mechanical and electrical criteria to weigh the trade-offs properly. We scored torque margin, RPM, weight, integration effort, packaging feasibility, electrical compatibility, and future flexibility.
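A weighted decision matrix of this kind can be sketched in a few lines of Python. The criteria names follow the list above, but the weights, the 1 to 5 scores, and the two candidate labels are hypothetical and chosen purely for illustration; they are not the values from our report.

```python
# Hypothetical weights: higher means the criterion matters more.
WEIGHTS = {
    "torque_margin": 3,
    "rpm": 2,
    "weight": 2,
    "integration_effort": 3,
    "packaging_feasibility": 3,
    "electrical_compatibility": 2,
    "future_flexibility": 1,
}

# Hypothetical 1-5 scores (5 is best) for two illustrative candidates.
CANDIDATES = {
    "large_motor": {
        "torque_margin": 5, "rpm": 4, "weight": 2, "integration_effort": 2,
        "packaging_feasibility": 1, "electrical_compatibility": 4,
        "future_flexibility": 5,
    },
    "small_motor": {
        "torque_margin": 3, "rpm": 4, "weight": 4, "integration_effort": 5,
        "packaging_feasibility": 5, "electrical_compatibility": 4,
        "future_flexibility": 2,
    },
}


def weighted_score(scores, weights):
    """Weighted average of the criterion scores, on the same 1-5 scale."""
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank(candidates, weights):
    """Candidate names sorted from highest to lowest weighted score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name], weights),
        reverse=True,
    )


if __name__ == "__main__":
    for name in rank(CANDIDATES, WEIGHTS):
        print(f"{name}: {weighted_score(CANDIDATES[name], WEIGHTS):.2f}")
```

With these made-up inputs, the candidate with less torque margin ranks first because packaging and integration carry as much weight as torque. The ranking is only as trustworthy as the weights, which is why they are worth agreeing on across mechanical and electrical before any scoring happens.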

When everything was weighted, a smaller motor — one that didn’t exactly smash our validated requirements — actually came out ahead.

It didn’t exceed the torque data by a huge margin. It just cleared it.

But it integrated cleanly. It required minimal structural change. It reduced downstream risk.

That was a hard lesson.

Because after all the effort we’d put into validation, part of us wanted to choose the motor that absolutely dominated the data.

Instead, we chose the one that made the most sense for the system.

Re-Evaluating the Decision

After settling on the smaller, pragmatic option, something didn’t quite sit right with me.

It wasn’t that the choice was wrong.

But after all the effort we’d put into modelling, testing, and validation, I couldn’t shake the feeling that we were just scraping in.

There had to be something we were missing.

That didn’t mean jumping back to the oversized motor that caused packaging headaches. It meant stepping back and re-evaluating our options — especially the ones we had dismissed earlier.

We went back through earlier notes. One motor in particular had been rated harshly early in the process based on lack of information and some uncertainty around integration.

When we reached out directly to the supplier for more information, the picture changed.

This motor:

- Exceeded our validated torque requirements with a comfortable margin

- Offered future-proofing without being overkill

- Fit within essentially the same packaging envelope as the smaller motor

- Required no structural redesign

- Integrated cleanly from a mechanical and electrical standpoint

It wasn’t as large or as powerful as our first “big” option — but it didn’t need to be.

It met the requirements. It gave us margin. And it didn’t create cascading integration problems.

Once we looked at it again objectively, it became clear this was the strongest system-level choice.

We updated the report. Adjusted the slides. Refined the recommendation.

That moment stood out to me because it genuinely felt like we had made the right decision.

We hadn’t become attached to our first choice. We hadn’t compromised and chosen a sub-optimal motor just to make everyone’s lives easier. And we didn’t stop even after we’d written the final report. Instead, we stepped back, reassessed everything objectively, and asked whether it was truly the best solution.

When the new option clearly outperformed the others — not just on torque, but on integration and future flexibility — the answer became obvious.

That felt like a defining moment of Design Camp.

What QUT Motorsport Design Camp Taught Me

Beyond actuator specs and torque graphs, QUT Motorsport Design Camp reinforced a few principles:

Validation beats assumption.

Inherited data is a starting point — not a conclusion. Until you measure it under your own conditions, it’s still a hypothesis.

Bench testing simplifies; track testing complicates.

It’s easy to build a clean model. It’s much harder to account for friction, tyre behaviour, and all the messy realities that show up once something is moving.

Packaging is a systems constraint.

What looks like an electrical decision quickly becomes a mechanical, manufacturing, and integration decision. A few millimetres can ripple through an entire design.

Simulation requires discipline.

FEA and modelling are powerful tools — but they don’t replace thinking. If your assumptions are wrong, your results will be precisely wrong.

Engineering is trade-offs.

The highest-performing component on paper isn’t always the best solution. Margin, integration effort, and future flexibility matter just as much as peak numbers.

Strong teams revisit decisions.

If something doesn’t feel fully resolved, it’s worth digging back in. Objectively reassessing and improving a decision isn’t backtracking — it’s good engineering.

At the start of Design Camp, I wasn’t even sure what a steering actuator really involved.

By the end, I had a much clearer appreciation for how quickly a “simple” component choice can expand into a multidisciplinary systems problem.

Final Thoughts on QUT Motorsport Design Camp

We wrapped up QUT Motorsport Design Camp with strong feedback from alumni and academics, which was encouraging.

But what stood out more was the process.

As a team, we:

- Validated steering torque from first principles

- Designed, built, and iterated a custom torque testing rig

- Refined modelling assumptions after physical discrepancies

- Ran FEA to validate structural integrity

- Conducted track testing and applied conservative safety margins

- Built a cross-functional decision matrix

- Revisited and improved our recommendation when better clarity emerged

For a student engineering project, it felt close to professional engineering practice, not because everything went smoothly, but because it didn’t.

There were assumptions that didn’t hold.

There were compromises that needed rethinking.

There were decisions that had to be reopened.

And that’s what made it worthwhile.